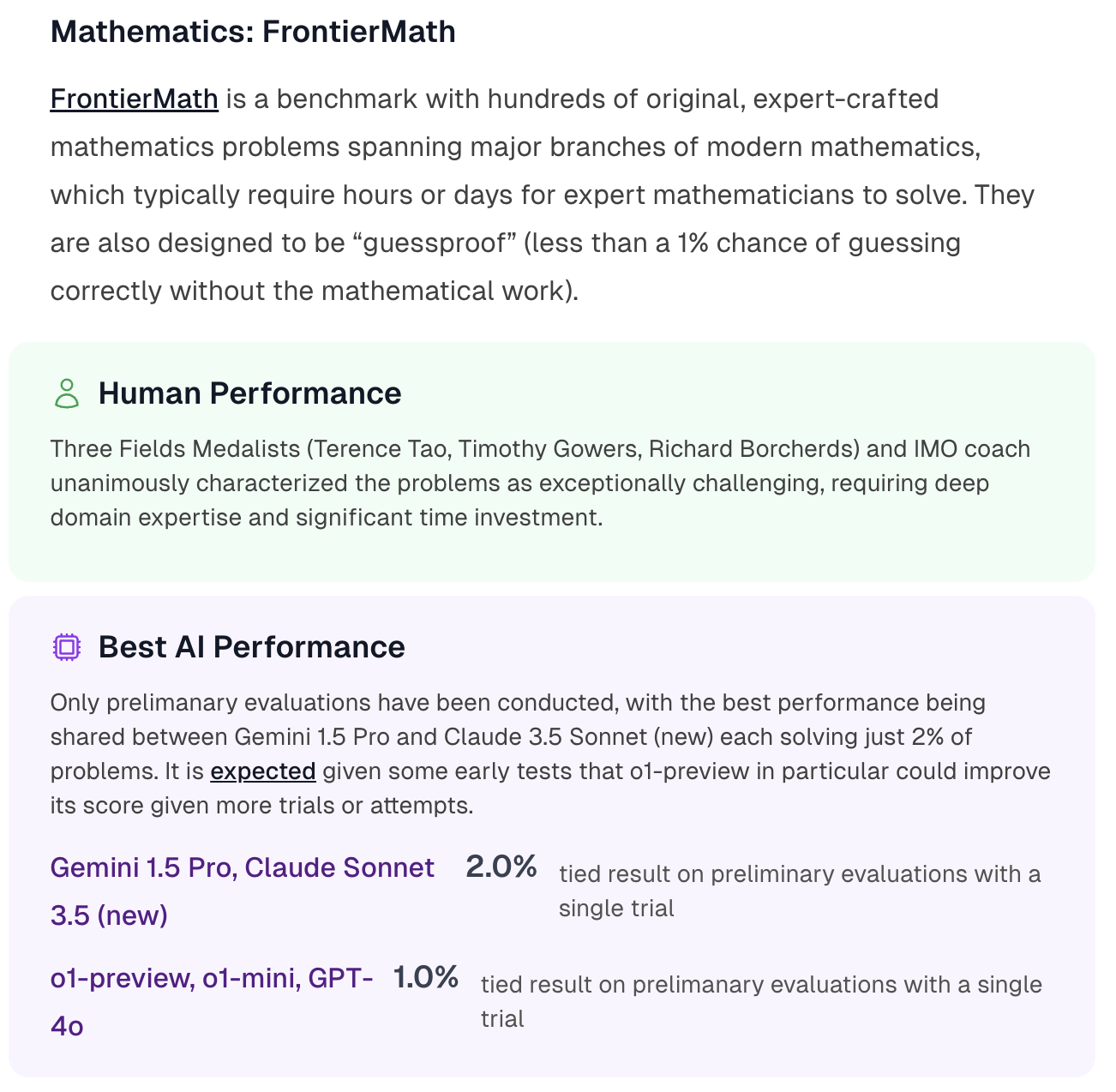

This market matches the "Mathematics: FrontierMath" question from the AI 2025 Forecasting Survey by AI Digest.

What will be the best performance by an AI system on FrontierMath as of December 31st, 2025?

Resolution criteria

This market will resolve using AI Digest as its source. If the reported number falls exactly on a boundary between choices (e.g., 3%), the higher choice will be used (i.e., 3-5%).
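The boundary rule amounts to treating each choice as a half-open interval that includes its lower edge. A minimal sketch, assuming hypothetical bucket edges: only the 3% edge and the "3-5%" choice come from the text above; `EDGES`, `LABELS`, and `resolve_bucket` are illustrative names, not the market's actual answer list.

```python
from bisect import bisect_right

# Hypothetical bucket edges in percent. Only the 3% edge and the "3-5%"
# choice are taken from the resolution criteria; the rest are assumptions.
EDGES = [3.0, 5.0, 10.0, 20.0]
LABELS = ["<3%", "3-5%", "5-10%", "10-20%", ">=20%"]


def resolve_bucket(score_pct: float) -> str:
    """Map a reported score to a choice.

    bisect_right places a value equal to an edge to the right of that
    edge, so a score exactly on a boundary resolves to the higher choice.
    """
    return LABELS[bisect_right(EDGES, score_pct)]


print(resolve_bucket(3.0))  # 3-5%
print(resolve_bucket(2.9))  # <3%
```

Using `bisect_right` rather than `bisect_left` is what encodes "ties go to the higher choice".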

Which AI systems count?

Any AI system counts if it operates within realistic deployment constraints and doesn't have unfair advantages over human baseliners.

Tool assistance, scaffolding, and any other inference-time elicitation techniques are permitted as long as:

There is no systematic unfair advantage over the humans described in the Human Performance section (e.g., AI systems having multiple outputs autograded while humans do not, or AI systems having internet access when humans do not).

Having the AI system complete the task does not use more compute than could be purchased with the wages needed to pay a human to complete the same task to the same level.

The PASS@k elicitation technique (which automatically grades k outputs from a model and chooses the best one) is a common example that we do not accept on this benchmark. Mathematicians are generally evaluated on their ability to produce a single correct answer, not on multiple answers that are automatically graded, so PASS@k would constitute an unfair advantage.
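To see why PASS@k is excluded, compare grading one submitted answer with autograding k samples and counting the problem as solved if any of them is correct. This is a simplified, hypothetical illustration (it does not use the unbiased pass@k estimator sometimes reported in papers); the function names, the sample answers, and the grader are assumptions for the example.

```python
from typing import Callable, Sequence


def single_answer_score(submitted: str, is_correct: Callable[[str], bool]) -> bool:
    """Accepted protocol: only the one submitted answer is graded."""
    return is_correct(submitted)


def pass_at_k(answers: Sequence[str], is_correct: Callable[[str], bool], k: int) -> bool:
    """Not accepted: the autograder checks up to k outputs and the problem
    counts as solved if any one of them is correct."""
    return any(is_correct(a) for a in answers[:k])


# Hypothetical problem whose correct answer is "42", with three model samples.
samples = ["41", "42", "43"]


def grader(answer: str) -> bool:
    return answer == "42"


print(single_answer_score(samples[0], grader))  # False
print(pass_at_k(samples, grader, k=3))          # True
```

The same model outputs score differently: the automatic grader effectively picks the correct answer for the model, which a human taking the test does not get.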

If there is evidence of training contamination leading to substantially increased performance, scores will be adjusted accordingly or disqualified.